Why Data Cleaning Has Become Critical in Modern Market Research

Market research is often judged by the quality of its insights. But long before insights are generated, dashboards are created, or reports are delivered, there is another process quietly determining whether the research itself can actually be trusted: data cleaning.

In modern research environments, collecting responses is no longer the hardest part. The real challenge is ensuring that the data flowing into research systems is:

- authentic

- reliable

- consistent

- analytically usable

As online surveys, digital panels, and large-scale data collection continue to expand, market research teams are increasingly dealing with:

- duplicate responses

- incomplete records

- AI-generated answers

- low-engagement participation

- inconsistent respondent behavior

- invalid data patterns

Without proper data cleaning, even large research studies can become statistically unreliable and methodologically weak.

This is why data cleaning in market research is no longer viewed as a secondary operational step. It has become one of the most critical processes in maintaining research quality and analytical integrity.

What Is Data Cleaning in Market Research?

Data cleaning refers to the process of identifying, correcting, filtering, or removing inaccurate, inconsistent, duplicate, or low-quality data from research datasets before analysis begins.

In market research, data cleaning helps ensure that collected responses are:

- valid

- complete

- logically consistent

- methodologically defensible

The process is sometimes also referred to as:

- database cleaning

- database cleansing

- data base cleaning

- data base cleansing

Although terminology varies, the objective remains the same:

Improving the reliability and usability of research data

Data cleaning is particularly important in online research environments where response quality can vary significantly across participants and recruitment sources.

Why Data Cleaning Matters in Market Research

Poor-quality data can compromise every stage of the research process.

Even small volumes of unreliable participation can introduce noise into:

- segmentation studies

- trend analysis

- behavioral modeling

- statistical outputs

- qualitative synthesis

- longitudinal tracking

In some cases, datasets may appear large and complete while still containing substantial volumes of low-authenticity or invalid participation.

This creates a dangerous situation where analytical outputs may appear statistically sound despite being built on unreliable underlying data.

Industry discussions increasingly describe poor-quality participation and insufficient data cleaning as major threats to modern online research reliability.

The Growing Complexity of Data Cleaning

Historically, data cleaning was relatively straightforward.

Researchers typically focused on:

- missing responses

- duplicate records

- obvious outliers

- incomplete surveys

But modern market research environments are far more complex.

Today’s datasets often include:

- AI-generated responses

- behavioral inconsistencies

- panel overlap

- coordinated participation behavior

- synthetic respondent patterns

- multilingual responses

- unstructured qualitative data

As research becomes increasingly digital and large-scale, the complexity of database cleansing continues growing rapidly.

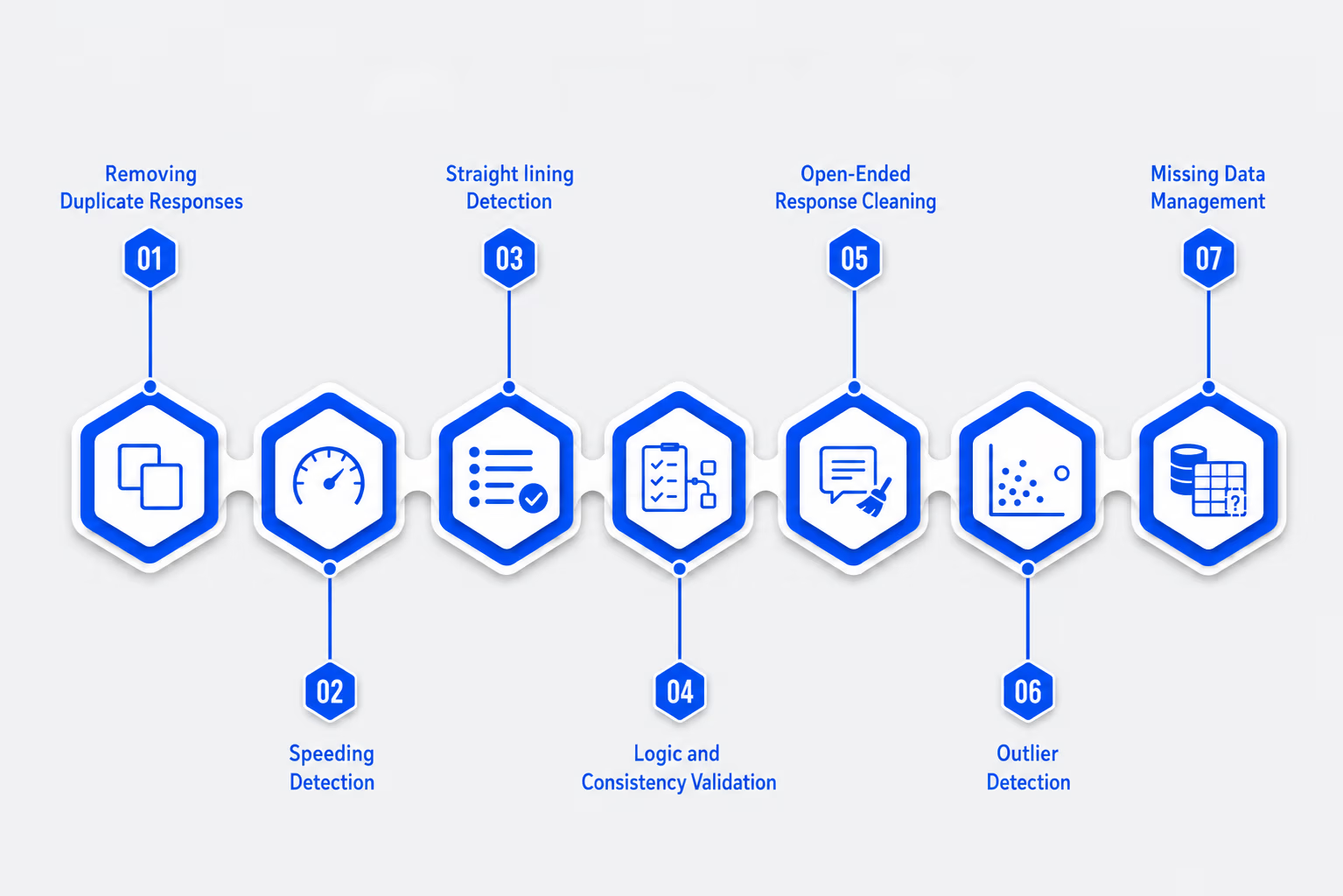

Common Data Cleaning Techniques Used in Market Research

Modern research teams use multiple techniques simultaneously to improve dataset reliability.

1. Removing Duplicate Responses

Duplicate participation remains one of the most common data quality issues in online surveys.

Researchers identify duplicates through:

- IP matching

- device fingerprinting

- email verification

- behavioral similarity analysis

Duplicate removal helps preserve sample integrity and prevent response inflation.

2. Speeding Detection

Some respondents complete surveys unrealistically quickly without meaningful engagement.

Researchers often establish minimum completion thresholds based on:

- survey length

- question complexity

- expected reading time

Responses completed significantly faster than realistic engagement benchmarks are often flagged for review or removal.

3. Straightlining Detection

Straightlining occurs when participants repeatedly select the same response pattern across multiple questions.

Researchers analyze:

- matrix response repetition

- lack of answer variability

- unusual consistency patterns

This helps identify disengaged or low-attention participation.

4. Logic and Consistency Validation

Data cleaning frequently involves checking whether responses remain logically consistent throughout the survey.

Examples include:

- contradictory demographic answers

- unrealistic age and income combinations

- inconsistent behavioral responses

Consistency checks help identify invalid or unreliable participation.

5. Open-Ended Response Cleaning

Qualitative responses increasingly require deeper validation due to the rise of AI-generated participation.

Researchers now review open-ended responses for:

- repetitive phrasing

- semantic inconsistency

- low contextual depth

- copied language patterns

- AI-generated response indicators

Open-ended cleaning has become one of the fastest-growing areas of modern data quality management.

6. Outlier Detection

Researchers identify statistical outliers that may distort analysis.

This includes:

- unrealistic spending patterns

- abnormal response distributions

- impossible behavioral claims

Outlier management helps improve analytical reliability.

7. Missing Data Management

Incomplete datasets remain common in survey research.

Researchers must decide whether to:

- remove incomplete records

- impute missing values

- retain partial responses

The correct approach depends on study design and methodological requirements.

Why Database Cleaning Is Becoming More Difficult

Several major industry shifts are making data cleaning significantly more challenging.

AI-Generated Responses

Generative AI systems can now produce responses that appear:

- grammatically polished

- contextually coherent

- emotionally structured

This makes low-quality participation harder to identify manually.

Traditional database cleansing techniques were not designed for AI-assisted participation environments.

Larger and Faster Datasets

Modern online studies often involve:

- thousands of respondents

- multiple recruitment channels

- global audiences

- overlapping panels

As datasets grow larger, manual cleaning becomes increasingly impractical.

Unstructured Data Growth

Today’s market research increasingly includes:

- open-ended responses

- interview transcripts

- discussion-based research

- social listening signals

Cleaning unstructured qualitative data is substantially more complex than cleaning structured survey data.

The Risks of Poor Data Cleaning

Insufficient data cleaning can compromise research reliability in multiple ways.

Poor-quality data may lead to:

- distorted statistical outputs

- unstable segmentation models

- inconsistent trend analysis

- unreliable respondent profiling

- reduced methodological confidence

In qualitative research, weak cleaning processes may introduce artificial themes and misleading narratives into thematic analysis.

This is why many research teams increasingly view data cleaning as a core methodological requirement—not just a post-processing step.

Manual vs Automated Data Cleaning

Historically, much of data cleaning relied heavily on manual review.

Researchers manually evaluated:

- suspicious responses

- open-ended answers

- behavioral inconsistencies

- duplicate patterns

While manual review remains valuable, modern research scale increasingly requires automation.

Automated data cleaning systems now assist with:

- behavioral scoring

- response validation

- duplicate detection

- anomaly identification

- qualitative signal review

However, automation alone is not always sufficient.

AI-generated participation and sophisticated fraud behavior often require contextual interpretation beyond simple automated rules.

The Shift Toward Intelligence-Led Data Cleaning

As datasets become more complex, research teams are increasingly moving toward intelligence-powered quality-control systems.

Platforms such as BioBrain Insights reflect this transition through intelligence-powered and professionally-led research systems designed to strengthen data reliability beyond traditional database cleaning approaches.

Approaches such as the RRR Framework - focused on recency, relevance, and resonance - help filter meaningful and contextually aligned research signals from large datasets, while systems such as InstaQual support deeper evaluation of qualitative responses through transcript structuring, thematic synthesis, and contextual validation.

This reflects a broader industry movement away from isolated data cleaning tasks toward continuously evaluating data authenticity, contextual consistency, and methodological reliability throughout the research workflow itself.

Best Practices for Data Cleaning in Market Research

As online research environments evolve, several best practices are becoming increasingly important.

Use Layered Validation Systems

Relying on a single quality-control check is rarely sufficient.

Researchers increasingly combine:

- behavioral analysis

- logic validation

- response consistency review

- contextual evaluation

- qualitative signal analysis

to improve reliability.

Clean Data Continuously

Data cleaning should not occur only after fieldwork ends.

Continuous monitoring during collection helps identify problems earlier and reduces large-scale contamination risks.

Validate Open-Ended Responses Carefully

Open-ended responses now require deeper review due to the growth of AI-generated participation.

Qualitative validation is becoming increasingly important in modern research environments.

Prioritize Context, Not Just Rules

Rigid validation rules alone may remove legitimate respondents while allowing sophisticated fraud to remain undetected.

Modern database cleansing increasingly requires contextual interpretation.

The Future of Data Cleaning in Market Research

Data cleaning is rapidly evolving from a technical support task into a strategic component of research reliability.

Over the next few years, market research teams will likely adopt more advanced systems involving:

- AI-assisted validation

- behavioral authenticity scoring

- contextual response analysis

- integrated reliability frameworks

- real-time quality monitoring

The future of data cleaning will depend not only on removing invalid responses but on continuously evaluating whether research data remains authentic, relevant, and methodologically defensible throughout the research lifecycle.

Conclusion

Data cleaning in market research is no longer just an operational process it has become a foundational requirement for maintaining research reliability, analytical consistency, and methodological integrity in increasingly complex digital research environments.

As datasets become larger, faster, and more unstructured, research teams are facing growing pressure to manage challenges such as duplicate participation, low-engagement responses, AI-generated answers, panel overlap, and inconsistent qualitative signals. Traditional database cleaning and database cleansing methods alone are no longer sufficient to maintain trustworthy research data at scale.

This is where the industry is increasingly shifting toward intelligence-powered and professionally-led research systems capable of continuously evaluating data authenticity throughout the workflow itself. Platforms such as BioBrain Insights reflect this transition through approaches like the RRR Framework focused on recency, relevance, and resonance and qualitative intelligence systems such as InstaQual, which support deeper contextual validation, structured transcript analysis, thematic synthesis, and signal-level quality evaluation across modern research datasets.

As market research continues evolving, the future of data cleaning will depend less on isolated post-survey filtering and more on continuously assessing the reliability, relevance, and integrity of data throughout the entire research lifecycle.