Why Data Cleaning Techniques Matter More Than Ever

Modern market research generates enormous volumes of data every day.

Surveys, interviews, online panels, discussion communities, customer feedback systems, and digital conversations continuously produce streams of quantitative and qualitative information. But raw data alone has very little value unless it can be trusted, structured, and analyzed accurately.

This is where data cleaning techniques become essential.

In today’s research environments, datasets often contain:

- Inconsistent formatting

- Incomplete responses

- Duplicate records

- Fragmented open-ended answers

- Irregular category structures

- Unstructured text data

- Anomalous response behavior

Without proper cleaning processes, research teams risk analyzing datasets that are statistically unstable, difficult to interpret, or methodologically inconsistent.

As online research becomes larger and more complex, data cleaning is no longer simply a technical support task. It has become a foundational process that directly affects research reliability, analytical quality, and dataset usability.

What Are Data Cleaning Techniques?

Data cleaning techniques are methods used to organize, standardize, structure, validate, and prepare raw research data before analysis begins.

These techniques help researchers improve:

- dataset consistency

- analytical readiness

- formatting reliability

- variable alignment

- qualitative organization

- statistical usability

In market research, data cleaning techniques are applied across both:

- quantitative datasets

- qualitative research environments

The goal is not simply to remove “bad data,” but to ensure research information becomes structurally usable and methodologically dependable.

Why Modern Research Requires More Advanced Cleaning Techniques

Traditional research datasets were relatively structured and manageable.

Most studies involved:

- smaller sample sizes

- simpler survey structures

- limited qualitative data

- fewer digital collection channels

Today’s research environments are larger, more varied, and far less structured.

Modern datasets often include:

- multilingual responses

- open-ended narratives

- transcript-based interviews

- digital behavior signals

- large-scale online participation

- cross-platform research inputs

As a result, researchers increasingly require more sophisticated and layered cleaning workflows capable of handling both structured and unstructured information.

1. Variable Standardization

One of the most important data cleaning techniques in market research is variable standardization.

Raw datasets frequently contain inconsistent response formats.

For example:

- “USA”

- “United States”

- “US”

may all refer to the same category.

Similarly:

- “Male”

- “male”

- “M”

can create inconsistencies during analysis.

Variable standardization ensures that all responses follow consistent formatting and category structures throughout the dataset.

This technique improves:

- statistical accuracy

- segmentation consistency

- dashboard reporting reliability
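As a minimal sketch of the idea above, the snippet below maps raw variants to one canonical label through a lookup table. The tables `COUNTRY_MAP` and `GENDER_MAP`, the function name `standardize`, and the choice of canonical labels are all illustrative assumptions, not part of any specific research platform.

```python
# Hypothetical lookup tables: variants and canonical labels are illustrative.
COUNTRY_MAP = {
    "usa": "United States",
    "us": "United States",
    "united states": "United States",
}
GENDER_MAP = {"male": "Male", "m": "Male", "female": "Female", "f": "Female"}


def standardize(value, mapping):
    """Return the canonical label for a raw response, or None if unrecognized."""
    if value is None:
        return None
    # Ignore surrounding whitespace, letter case, and periods ("U.S." -> "us").
    key = value.strip().lower().replace(".", "")
    return mapping.get(key)


raw_countries = ["USA", "United States", " us ", "U.S.", "Canada"]
for raw in raw_countries:
    print(repr(raw), "->", standardize(raw, COUNTRY_MAP))
```

Returning `None` for unrecognized values, rather than guessing, keeps unmapped responses visible so they can be reviewed and added to the table deliberately.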

2. Data Normalization

Normalization refers to organizing datasets into consistent formats that support easier analysis.

Researchers normalize elements such as:

- dates

- currencies

- percentages

- scales

- text capitalization

For example:

- “5/1/25”

- “01-May-2025”

- “2025-05-01”

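must often be converted into one standardized date structure. Note that “5/1/25” is ambiguous on its own: it reads as 1 May in month-first conventions and 5 January in day-first conventions, so the source convention has to be known before conversion.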

Normalization becomes especially important in multi-country and longitudinal research studies.
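The date example above can be sketched in a few lines. This is one possible approach, assuming the three input formats listed in `KNOWN_FORMATS` and assuming slash-separated dates are month-first; both assumptions would need to be checked against the actual data source. The function name `normalize_date` is hypothetical.

```python
from datetime import datetime

# Assumed input formats, tried in order. "%m/%d/%y" reads "5/1/25" as
# month/day/year; use "%d/%m/%y" instead if the source is day-first.
# "%b" matches English month abbreviations under the default C locale.
KNOWN_FORMATS = ("%Y-%m-%d", "%d-%b-%Y", "%m/%d/%y")


def normalize_date(text):
    """Return the date as an ISO 8601 string (YYYY-MM-DD), or None if unparseable."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue  # Try the next candidate format.
    return None


for raw in ["5/1/25", "01-May-2025", "2025-05-01", "sometime in May"]:
    print(raw, "->", normalize_date(raw))
```

Values that match none of the formats come back as `None` so they can be inspected rather than silently coerced.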

3. Duplicate Record Detection

Duplicate participation remains a major challenge in online market research.

Researchers use duplicate detection techniques to identify repeated entries through:

- participation history

- email similarity

- device signals

- response matching

- behavioral overlap

Duplicate cleaning helps maintain sample integrity and prevents response inflation.

In large-scale online research environments, even small volumes of duplicate participation can distort dataset reliability.
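A simplified illustration of two of the signals listed above, email similarity and response matching, might look like the following. The record layout (`id`, `email`, `answers`) and both function names are assumptions made for this sketch. Stripping the `+tag` part of an email address is also an assumption that holds for some mail providers but not all.

```python
def normalize_email(email):
    """Lowercase the address and drop any "+tag" suffix in the local part."""
    local, _, domain = email.strip().lower().partition("@")
    local = local.split("+", 1)[0]  # Assumption: "+tag" is an alias marker.
    return f"{local}@{domain}"


def find_duplicates(records):
    """Return (record_id, first_seen_id) pairs for suspected duplicates."""
    seen = {}
    duplicates = []
    for rec in records:
        keys = [
            ("email", normalize_email(rec["email"])),
            ("answers", tuple(rec["answers"])),
        ]
        # A record is suspect if either key was already seen on an earlier record.
        match = next((seen[k] for k in keys if k in seen), None)
        if match is not None:
            duplicates.append((rec["id"], match))
        owner = rec["id"] if match is None else match
        for k in keys:
            seen.setdefault(k, owner)
    return duplicates


records = [
    {"id": "r1", "email": "Ana@Example.com", "answers": [5, 3, 4]},
    {"id": "r2", "email": "ana+panel@example.com", "answers": [2, 2, 1]},
    {"id": "r3", "email": "bo@example.com", "answers": [5, 3, 4]},
    {"id": "r4", "email": "cy@example.com", "answers": [1, 1, 1]},
]
print(find_duplicates(records))
```

Identical answer patterns can occur legitimately, especially in short surveys, so matches of this kind are better treated as candidates for review than as automatic removals.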

4. Open-Ended Response Structuring

One of the fastest-growing areas of market research data cleaning involves organizing qualitative responses.

Open-ended survey answers are often:

- fragmented

- inconsistent

- repetitive

- difficult to analyze at scale

Researchers increasingly use structuring techniques such as:

- thematic coding

- semantic clustering

- sentiment grouping

- topic tagging

- phrase normalization

These methods help convert free-text responses into analyzable research variables.

As qualitative research volumes continue to increase, open-ended structuring is becoming one of the most important data preparation processes in modern research workflows.
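Topic tagging, one of the structuring techniques listed above, can be sketched with a simple keyword codebook. The `CODEBOOK` contents and the function name `tag_response` are hypothetical; real coding frames are usually developed from the data, and semantic clustering or sentiment grouping would need more than keyword matching.

```python
import re

# Hypothetical codebook: topic -> keywords that signal it.
CODEBOOK = {
    "price": {"price", "expensive", "cheap", "cost"},
    "delivery": {"delivery", "shipping", "late", "arrived"},
    "quality": {"quality", "broke", "broken", "durable"},
}


def tag_response(text):
    """Return the sorted list of topics whose keywords appear in the response."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    return sorted(topic for topic, words in CODEBOOK.items() if tokens & words)


responses = [
    "Too EXPENSIVE, and the delivery was late.",
    "It broke after a week.",
    "Love it!",
]
for response in responses:
    print(tag_response(response), "<-", response)
```

Each response becomes a set of topic flags that can be tabulated like any other variable. Responses that receive no tag are worth sampling, since they often point to topics missing from the codebook.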

5. Missing Data Management

Most research datasets contain incomplete responses.

Participants may:

- skip questions

- abandon surveys midway

- provide partial demographic data

Researchers must decide whether to:

- remove incomplete records

- retain partial responses

- estimate missing values

- restructure variable dependencies

The correct approach depends on the research methodology and analytical objectives.

Effective missing-data management helps improve dataset continuity without compromising reliability.
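The options listed above (remove, retain, or estimate) can be combined in one pass, as in this sketch. The 50% completeness threshold, the use of the median for estimation, and the names `completeness` and `handle_missing` are illustrative assumptions; the appropriate rule depends on the study design.

```python
from statistics import median


def completeness(record, fields):
    """Share of the listed fields that have a non-missing value."""
    answered = sum(1 for f in fields if record.get(f) is not None)
    return answered / len(fields)


def handle_missing(records, fields, min_complete=0.5, impute_field=None):
    """Drop mostly-empty records, keep partial ones, optionally impute one field."""
    # Copy each kept record so the raw data stays untouched.
    kept = [dict(r) for r in records if completeness(r, fields) >= min_complete]
    if impute_field is not None:
        observed = [r[impute_field] for r in kept if r.get(impute_field) is not None]
        if observed:
            fill = median(observed)
            for r in kept:
                if r.get(impute_field) is None:
                    r[impute_field] = fill
                    r[impute_field + "_imputed"] = True  # Keep an audit flag.
    return kept


fields = ["age", "income", "satisfaction"]
records = [
    {"id": 1, "age": 34, "income": 52000, "satisfaction": 4},
    {"id": 2, "age": 29, "income": None, "satisfaction": 5},
    {"id": 3, "age": None, "income": None, "satisfaction": None},
    {"id": 4, "age": 41, "income": 48000, "satisfaction": None},
]
cleaned = handle_missing(records, fields, impute_field="income")
print(cleaned)
```

Flagging imputed values separately lets analysts rerun results with and without the estimates.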

6. Outlier Analysis

Outliers are responses that differ significantly from the rest of the dataset.

Examples may include:

- unrealistic purchase claims

- impossible usage frequencies

- abnormal spending values

- inconsistent behavioral patterns

Researchers evaluate whether outliers represent:

- genuine edge cases

- input errors

- structural inconsistencies

Outlier management helps improve analytical stability and statistical consistency.
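One common way to surface candidate outliers in a numeric variable is the interquartile range (IQR) rule, sketched below. The text above does not prescribe a specific method, so the IQR rule, the multiplier `k=1.5`, and the function name `iqr_outliers` are assumptions chosen for illustration.

```python
from statistics import quantiles


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR outside the first or third quartile."""
    q1, _, q3 = quantiles(values, n=4)  # Quartile cut points.
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


monthly_spend = [40, 55, 60, 62, 70, 75, 80, 5000]
print(iqr_outliers(monthly_spend))
```

The function only flags values. Whether a flagged value is a genuine edge case or an input error still requires the kind of review described above.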

7. Category Consolidation

Large datasets often contain fragmented response categories that require consolidation before analysis.

For example, respondents may describe similar behaviors using slightly different language.

Researchers therefore merge overlapping categories into standardized analytical groups.

This technique improves:

- tabulation clarity

- segmentation consistency

- trend readability

- dashboard usability

Category consolidation is especially important in large-scale open-ended research environments.
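As a rough sketch of consolidation, the snippet below merges fragmented labels into analytical groups and re-tabulates them. The groups in `GROUPS`, the `"Unassigned"` fallback, and the function name `consolidate` are hypothetical examples.

```python
from collections import Counter

# Hypothetical analytical groups and the fragmented labels each one absorbs.
GROUPS = {
    "Online shopping": {"buys online", "orders from websites", "shops on apps"},
    "In-store shopping": {"goes to the store", "shops at the mall"},
}
# Invert to label -> group for direct lookup.
LOOKUP = {label: group for group, labels in GROUPS.items() for label in labels}


def consolidate(labels, fallback="Unassigned"):
    """Count responses per analytical group; unknown labels go to the fallback."""
    return Counter(LOOKUP.get(label.strip().lower(), fallback) for label in labels)


coded = [
    "Buys online",
    "shops on apps",
    "Goes to the store",
    "orders from websites",
    "barters with neighbors",
]
print(consolidate(coded))
```

Keeping an explicit fallback group makes it easy to see how much of the data the current grouping scheme fails to cover.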

8. Qualitative Transcript Cleaning

Modern qualitative research increasingly involves:

- interview transcripts

- focus group discussions

- conversational datasets

- discussion-based research

Before analysis begins, transcripts often require cleaning processes such as:

- speaker separation

- filler-word removal

- timestamp alignment

- thematic organization

- contextual tagging

Transcript structuring has become increasingly important as qualitative research scales digitally.
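Speaker separation, filler-word removal, and timestamp alignment can be illustrated on a small transcript. The line format `[MM:SS] Speaker: text`, the filler list, and the function name `clean_transcript` are assumptions for this sketch; real transcripts vary widely by tool.

```python
import re

# Assumed line format: "[MM:SS] Speaker: text".
LINE = re.compile(r"^\[(\d{2}):(\d{2})\]\s*(\w[\w ]*?):\s*(.*)$")
# Illustrative filler list; an optional trailing comma is removed with the filler.
FILLERS = re.compile(r"\b(?:um+|uh+|you know)\b,?\s*", re.IGNORECASE)


def clean_transcript(raw):
    """Split a transcript into speaker turns with offsets in seconds."""
    turns = []
    for line in raw.splitlines():
        m = LINE.match(line.strip())
        if not m:
            continue  # Lines that do not fit the assumed format are skipped.
        minutes, seconds, speaker, text = m.groups()
        text = FILLERS.sub("", text)
        text = re.sub(r"\s{2,}", " ", text).strip()
        turns.append({
            "seconds": int(minutes) * 60 + int(seconds),
            "speaker": speaker,
            "text": text,
        })
    return turns


raw = """[00:05] Moderator: So, um, what did you think of the app?
[01:12] P1: Uh, it was you know pretty easy to use."""
for turn in clean_transcript(raw):
    print(turn)
```

Filler removal changes the verbatim record, so it is usually safer to keep the raw transcript alongside the cleaned turns.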

9. Formatting and Structural Alignment

Research datasets frequently contain structural inconsistencies such as:

- broken column formatting

- inconsistent variable naming

- mixed scale structures

- fragmented tabulation layouts

Formatting alignment helps ensure datasets remain compatible across:

- statistical software

- dashboard systems

- visualization tools

- reporting environments

This step is critical for smooth downstream analysis workflows.
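Inconsistent variable naming is one of the easier structural issues to automate. The sketch below converts column headers to one convention; the choice of snake_case, the duplicate-suffix rule, and the names `to_snake_case` and `align_columns` are assumptions rather than an established standard.

```python
import re


def to_snake_case(name):
    """Convert a header such as "Q1 - Overall Satisfaction" to snake_case."""
    name = re.sub(r"([a-z0-9])([A-Z])", r"\1_\2", name.strip())  # camelCase split
    name = re.sub(r"[^0-9A-Za-z]+", "_", name)  # Non-alphanumeric runs -> "_"
    return name.strip("_").lower()


def align_columns(headers):
    """Return normalized names, adding a numeric suffix to repeated names."""
    seen = {}
    result = []
    for header in headers:
        base = to_snake_case(header) or "column"
        count = seen.get(base, 0)
        seen[base] = count + 1
        result.append(base if count == 0 else f"{base}_{count + 1}")
    return result


headers = ["Q1 - Overall Satisfaction", "ageGroup", " Age Group ", "Income ($)"]
print(align_columns(headers))
```

Repeated names after normalization often indicate that two columns hold the same variable, which is worth checking before the suffix is accepted.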

10. Behavioral Pattern Review

Research teams increasingly evaluate participation behavior as part of data cleaning.

Researchers review signals such as:

- response pacing

- interaction consistency

- navigation flow

- engagement patterns

Behavioral review helps identify inconsistent participation, such as implausibly fast completion, before analysis begins.

This approach reflects the growing integration of quality-control thinking into data preparation itself.
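Two widely used behavioral checks are speeding (completing far faster than typical) and straightlining (giving the same answer to every item in a grid). The sketch below treats these as examples of response pacing and interaction consistency. The thresholds, the record layout, and the function name `flag_behavior` are assumptions for illustration only.

```python
from statistics import median


def flag_behavior(respondents, speed_ratio=0.33, min_grid_items=5):
    """Return {respondent_id: [reasons]} for speeding and straightlining."""
    typical = median(r["duration_sec"] for r in respondents)
    flags = {}
    for r in respondents:
        reasons = []
        # Speeding: finished in under a fraction of the median duration.
        if r["duration_sec"] < speed_ratio * typical:
            reasons.append("speeding")
        # Straightlining: identical answer on every item of a long enough grid.
        grid = r["grid_answers"]
        if len(grid) >= min_grid_items and len(set(grid)) == 1:
            reasons.append("straightlining")
        if reasons:
            flags[r["id"]] = reasons
    return flags


respondents = [
    {"id": "a", "duration_sec": 600, "grid_answers": [4, 5, 3, 4, 2]},
    {"id": "b", "duration_sec": 540, "grid_answers": [3, 3, 3, 3, 3]},
    {"id": "c", "duration_sec": 90, "grid_answers": [1, 4, 2, 5, 3]},
    {"id": "d", "duration_sec": 660, "grid_answers": [5, 4, 4, 3, 5]},
]
print(flag_behavior(respondents))
```

A single flag is weak evidence on its own. Combining several signals before excluding a respondent reduces the risk of removing genuine participants.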

Why Data Cleaning Is Becoming More Strategic

Historically, data cleaning was often treated as a final technical step before analysis.

That perspective is changing rapidly.

Today, research teams increasingly recognize that poor structuring and inconsistent preparation can compromise analysis long before insights are generated.

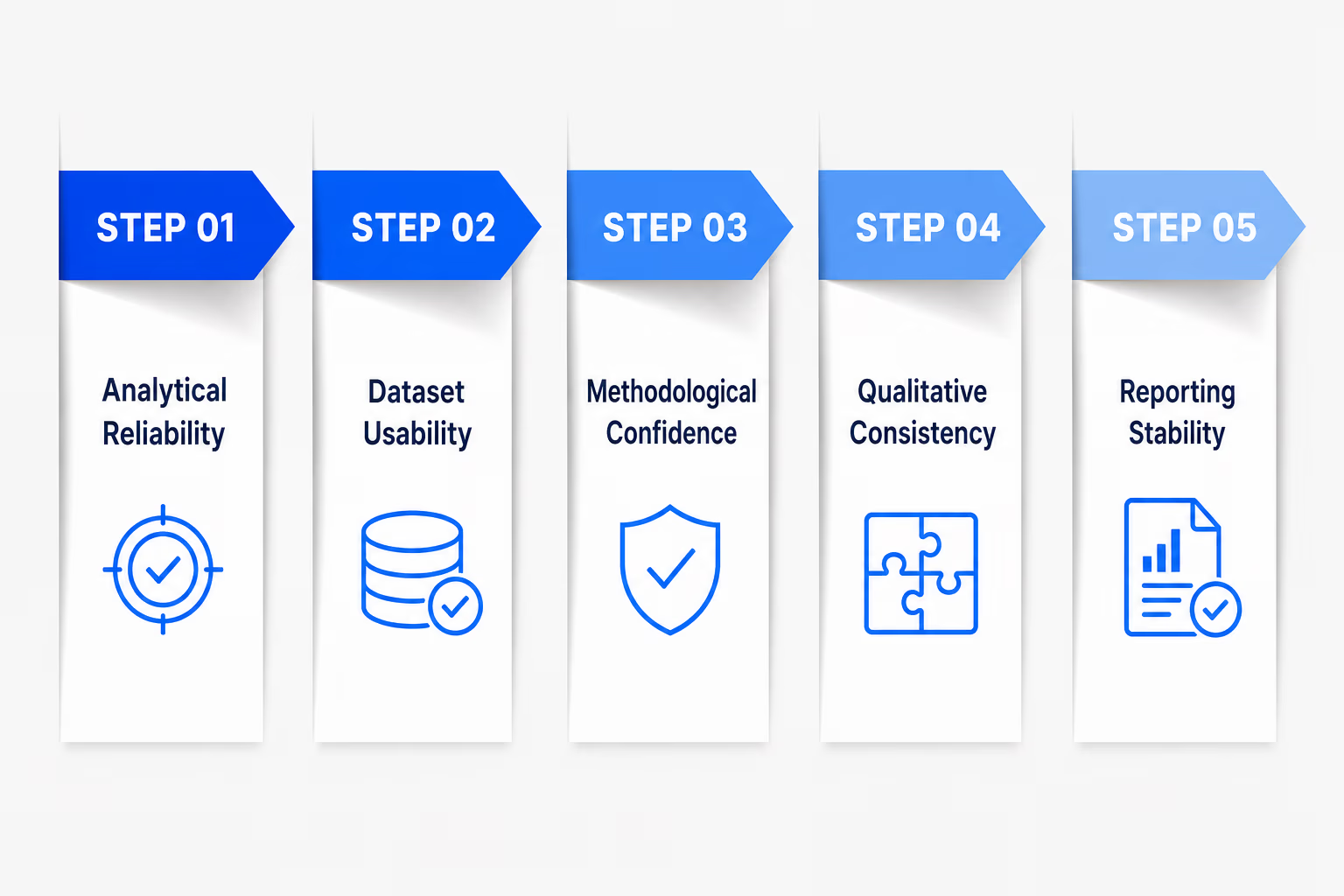

As datasets become larger and more unstructured, cleaning techniques now directly affect:

- analytical reliability

- dataset usability

- methodological confidence

- qualitative consistency

- reporting stability

This is why modern market research increasingly treats data cleaning as part of the broader research intelligence workflow.

The Rise of Intelligence-Led Data Structuring

Modern market research is moving beyond isolated spreadsheet correction toward more integrated and contextual data structuring systems.

Platforms such as BioBrain Insights reflect this transition through intelligence-powered and professionally led research systems designed to improve dataset reliability and contextual consistency throughout the research workflow.

Approaches such as the RRR Framework - focused on recency, relevance, and resonance - support the identification of contextually meaningful research signals, while systems such as InstaQual help structure interviews, discussions, transcripts, and open-ended responses through thematic synthesis and qualitative organization workflows.

This reflects a broader industry movement toward continuously improving:

- analytical readiness

- contextual consistency

- qualitative integrity

- dataset usability

throughout modern research operations.

Best Practices for Using Data Cleaning Techniques

As research complexity increases, several best practices matter more than before.

• Clean Data Continuously

Data preparation should begin during fieldwork - not only after collection ends.

Continuous structuring improves workflow efficiency and reduces downstream correction requirements.

• Combine Quantitative and Qualitative Cleaning

Modern research increasingly requires both statistical structuring and contextual qualitative organization.

• Prioritize Consistency Across Variables

Consistent formatting and standardized categories improve long-term analytical stability.

• Structure Open-Ended Data Early

Waiting until reporting stages to organize qualitative responses creates unnecessary complexity.

Conclusion

Data cleaning has become one of the most important components of modern market research workflows. As datasets become larger, more fragmented, and less structured, research teams require more sophisticated approaches for preparing information before analysis begins.

From normalization and variable standardization to transcript structuring and thematic organization, modern data cleaning now involves far more than correcting spreadsheet errors. It has become a foundational process for improving dataset usability, analytical consistency, and methodological reliability across both quantitative and qualitative research environments.

This is why the industry is increasingly shifting toward intelligence-powered and professionally led research systems capable of continuously structuring, organizing, and evaluating research data throughout the workflow itself. Platforms such as BioBrain Insights, through systems like the RRR Framework and InstaQual, reflect this broader movement toward more contextually aware, structured, and analytically dependable market research workflows designed for modern research environments.